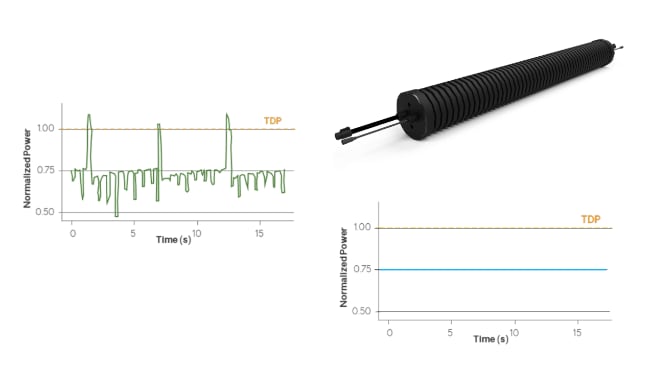

AI data centers are pushing power infrastructure to its limits: ultra‑fast load steps from GPU clusters cause severe voltage sags and nuisance trips. Supercapacitor‑based systems such as Capacitech’s C‑Link modules add a fast, high‑power buffer that stabilizes the DC bus during these transients. This lets operators increase rack power density and AI utilization without massively over‑provisioning batteries, UPS systems, or grid capacity.

Why AI data centers struggle with voltage sags

AI and accelerated compute workloads create highly dynamic, spiky power demand rather than smooth, predictable profiles. GPU clusters can step from idle to several kilowatts per server in milliseconds, driving abrupt current increases along the entire power chain.

These rapid load steps can lead to:

- Voltage sags on distribution buses when power electronics and upstream sources cannot ramp fast enough

- Nuisance tripping of breakers and protection devices due to transient overcurrent and inrush events

- Stress and premature aging of PSUs and UPS systems operated far from their optimum efficiency region

- Unexpected server resets or errors when supply rails drop below allowable limits, even for a few milliseconds

Traditional battery‑based backup solutions are optimized for energy, not instantaneous power, and often react too slowly to fully catch microsecond‑to‑millisecond events. Supercapacitors fill this gap by delivering very high current on short timescales, effectively bridging the gap between the fast transient and the slower response of UPS systems, generators, and the grid.
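To get a feel for the magnitudes involved, the following is a rough sketch rather than a design method. It assumes a constant bus voltage during the step and that only the local bus capacitance supplies the extra current until the upstream source responds. The function names and all numeric values (24 kW step, 48 V bus, 2 ms delay, 0.5 F of bus capacitance) are hypothetical and not taken from Capacitech material.

```python
def current_step(delta_power_w: float, bus_voltage_v: float) -> float:
    """Current increase (A) for a power step at roughly constant bus voltage."""
    return delta_power_w / bus_voltage_v


def droop_without_buffer(delta_current_a: float,
                         response_delay_s: float,
                         bus_capacitance_f: float) -> float:
    """Approximate voltage droop (V) if the bus capacitance alone supplies
    the extra current until the upstream source catches up: dV = I * t / C.
    This ignores ESR, cable inductance, and converter behavior."""
    return delta_current_a * response_delay_s / bus_capacitance_f


# Hypothetical example: a rack stepping up by 24 kW on a 48 V DC segment.
delta_i = current_step(delta_power_w=24_000, bus_voltage_v=48.0)
droop = droop_without_buffer(delta_i, response_delay_s=0.002,
                             bus_capacitance_f=0.5)
print(f"current step: {delta_i:.0f} A, estimated droop: {droop:.2f} V")
```

Under these assumed numbers the step is about 500 A and the estimated droop about 2 V. The droop grows linearly with the response delay and shrinks with added capacitance, which is the gap a fast buffer is meant to cover.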

Key features and benefits of C‑Link supercapacitor modules

Capacitech’s C‑Link solution is a modular supercapacitor‑based energy storage system designed as a power‑quality layer for data centers. It focuses on high power handling and millisecond‑class response rather than long‑duration backup.

Key characteristics include:

- Millisecond response time to voltage sags and transient events on data center buses

- High power density for large, short‑duration current pulses during AI workload spikes

- Modular architecture that can be scaled with rack count, power density, or AI adoption levels

- Flexible physical deployment on walls, fences, or cable trays, preserving valuable white‑space and rack area

- Integration as an add‑on layer in existing power architectures, minimizing the need for civil works or major retrofit construction

From an operator’s perspective, the main benefits are:

- Stabilized bus voltage during AI load spikes, reducing server glitches and downtime risk

- Less need to oversize UPS or battery systems purely for peak power rather than energy autonomy

- Improved utilization of existing infrastructure by smoothing the instantaneous power profile seen by the grid connection and backup systems

- Faster deployment than large‑scale infrastructure reinforcement: months rather than years, according to the manufacturer’s material

| Feature | C‑Link Modules | Batteries (Li‑Ion, LTO) | Supercapacitor OEMs | Flywheels |
|---|---|---|---|---|
| Response Time | Milliseconds | Seconds to minutes | Milliseconds | Milliseconds to seconds |
| Installation Time | Days | Months | Weeks to months | Weeks |
| Installation Requirements | Traditional, wall, fence, cable trays; single technician | Cabinets, concrete pads, cranes, heavy equipment | Cabinets, concrete pads, cranes, heavy equipment | Heavy foundation, specialized installation |
| Permitting Complexity | Minimal | Extensive (lithium safety) | Minimal to moderate | Complex |
| Operational Life | 10–20 years | 5–10 years | 10–20 years | 10–20 years |
| Maintenance | Minimal | Moderate to high | Minimal | High |
| Scalability | Add/relocate modules | Limited | Limited | Limited |
| Cost per kW | Low | High (oversized) | Low to moderate | High |
| Best Use Case | Millisecond surges, high power | Long discharge cycles | Millisecond surges, high power | Medium duration |
| Space Efficiency | Modular, flexible placement | Cabinet and container based | Cabinet and container based | Large footprint |

Typical applications in data center power paths

Supercapacitor modules like C‑Link fit best where transient power events are most harmful but durations remain short. In AI data centers, this typically aligns with high‑power but brief GPU load surges and simultaneous rack startup sequences.

Representative use cases include:

- AI‑focused racks with dense GPU configurations that exhibit steep load ramps and frequent workload‑driven spikes

- DC bus stabilization at intermediate distribution levels (for example 380 V or 48 V DC segments) to buffer transient currents

- Support for UPS systems by offloading the most aggressive power spikes, allowing batteries to handle longer events

- Protection of switchgear and power distribution units against current spikes that would otherwise cause nuisance tripping

- Retrofit upgrades in existing facilities where grid capacity or UPS scaling is constrained, but AI demand is increasing

Because the modules handle very short‑term events, they are not a replacement for traditional UPS or generator backup. Instead, they complement those systems by providing a high‑speed “shock absorber” in front of them.
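The “shock absorber” idea can be illustrated with a simple split of a sampled load profile into a slow part, which the upstream UPS or grid connection would follow, and a fast residual, which a buffer would have to supply. This is a minimal sketch using a first‑order low‑pass filter. It is not Capacitech’s control strategy, and the function name, time constant, and load values are all hypothetical.

```python
def split_load(load_w, dt_s, tau_s):
    """Split a sampled load profile (W) into a slow component (upstream
    sources) and a fast residual (buffer) using a first-order low-pass
    filter with time constant tau_s."""
    alpha = dt_s / (tau_s + dt_s)   # smoothing factor of the discrete filter
    slow, fast = [], []
    level = load_w[0]               # assume the filter starts in steady state
    for p in load_w:
        level += alpha * (p - level)
        slow.append(level)
        fast.append(p - level)      # what the buffer must cover at this sample
    return slow, fast


# Hypothetical profile: 1 ms samples, load steps from 5 kW to 25 kW.
profile = [5_000.0] * 5 + [25_000.0] * 20
slow, fast = split_load(profile, dt_s=0.001, tau_s=0.005)

peak_buffer_w = max(fast)
print(f"peak power from buffer: {peak_buffer_w:.0f} W")
print(f"upstream load right after step: {slow[5]:.0f} W")
```

In this sketch the upstream sources see a ramp instead of a 20 kW step, while the buffer delivers a short pulse that decays as the slow component catches up. A longer assumed time constant eases the upstream ramp but requires more energy from the buffer.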

Design‑in notes for engineers

From a design engineer’s standpoint, integrating supercapacitor modules into a data center requires coordinating electrical, mechanical, and operational aspects. The C‑Link concept addresses these areas but still needs project‑specific engineering.

Consider the following points during design‑in:

- Define the transient profile

Characterize worst‑case load steps from AI workloads: magnitude, rise time, duration, and repetition. This determines the required supercapacitor power and energy, as well as allowable voltage droop on the bus.

- Select the right system level

Decide whether C‑Link should sit at a central DC bus, per‑row distribution, or closer to rack‑level distribution. Central placement simplifies implementation but may reduce effectiveness for very localized, high‑frequency events.

- Coordinate with UPS and protection

Ensure the supercapacitor system and UPS control strategies are aligned so that the modules absorb short‑term sags, while UPS and generators handle longer events. Protective device settings (breakers, relays) should be reviewed to avoid unintended interactions under high di/dt conditions.

- Mechanical and thermal integration

Take advantage of the flexible mounting options (walls, cable trays, fences) to keep IT floor space free. Verify clearances, cable lengths, and thermal environment so that the modules operate within their specified temperature and ventilation limits.

- Monitoring and maintenance

Supercapacitor systems typically require less maintenance than batteries, but their health should still be integrated into facility monitoring. Check which monitoring interfaces (for example SNMP, Modbus, REST APIs) are available from the C‑Link system and plan alarm strategies accordingly.

- Lifecycle and TCO considerations

Because supercapacitors can withstand a very high number of cycles, they are particularly suitable for constant AI load smoothing. When performing a total cost of ownership analysis, consider reduced battery cycling, fewer nuisance outages, and deferred grid or UPS upgrades alongside the direct system cost.
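The first design‑in point, defining the transient profile, translates into a first‑pass capacitance estimate through the capacitor energy relation E = ½·C·(V_start² − V_min²). The sketch below assumes an ideal capacitor bank connected directly to the bus and ignores ESR, converter losses, and aging margins. The function name and the example values (20 kW pulse, 50 ms, 54 V down to 48 V) are hypothetical, and real sizing should follow the manufacturer’s datasheets.

```python
def required_capacitance(pulse_power_w: float,
                         pulse_duration_s: float,
                         v_start: float,
                         v_min: float) -> float:
    """First-pass capacitance (F) needed to deliver a constant-power pulse
    while the bank voltage falls from v_start to no lower than v_min.
    Derived from E = 0.5 * C * (v_start**2 - v_min**2), ideal capacitor."""
    if v_min >= v_start:
        raise ValueError("v_min must be below v_start")
    energy_j = pulse_power_w * pulse_duration_s   # energy drawn by the pulse
    return 2.0 * energy_j / (v_start ** 2 - v_min ** 2)


# Hypothetical transient: 20 kW for 50 ms, allowed droop from 54 V to 48 V.
c_needed = required_capacitance(pulse_power_w=20_000,
                                pulse_duration_s=0.05,
                                v_start=54.0,
                                v_min=48.0)
print(f"required capacitance: {c_needed:.2f} F")
```

With these assumed figures the pulse draws about 1 kJ and the estimate comes out at roughly 3.3 F. Tightening the allowable droop raises the required capacitance quickly, because usable energy depends on the difference of the squared voltages.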

For detailed electrical connection diagrams, busbar sizing rules, and protection coordination, Capacitech instructs users to rely on project documentation and datasheets rather than generic rules of thumb.

Source

This article is based on information published by Capacitech Energy about its C‑Link supercapacitor modules for AI and data center applications, supplemented by general industry material on supercapacitors in data centers. All detailed ratings and configuration options should be confirmed against the latest manufacturer datasheets and project documentation.